I. Introduction

II. Method

2.1 Participants

2.2 Music stimuli

2.3 Measures

2.4 Procedure

2.5 Data analysis

III. Results and discussion

3.1 Differences of valence response depending on vocal register, mode and the interaction effect

3.2 Differences of arousal response depending on vocal register, mode and the interaction effect

3.3 Difference of affective responses among the four vocal excerpts

IV. Conclusion

I. Introduction

Affective responses to music listening have long been studied in music and emotion research, yielding specific findings on the musical variables that may induce various emotional responses during listening. Existing studies suggest that musical elements such as tempo, mode,[1] dynamics,[2] pitch,[3] and rhythm[4] have a significant impact on listeners’ perceived emotion.

Among the many musical elements, some have been shown to have more influence on a particular emotion,[5] such as mode on valence[6] and tempo on arousal.[7] Music is composed of diverse musical elements including rhythmic, tonal and other components (e.g., timbre, texture, form). Rhythmic components such as tempo are understood to mostly govern the listener’s energy level,[1] while tonal components such as mode mainly govern the listener’s mood or feeling.[1] The harmonic structure, including consonance and dissonance, may also evoke different emotional responses, ranging from anxiety and tension to relaxation and calm.[8]

Tonal components mainly determine the emotionality of music through their primary feature, namely mode. Mode is the underlying framework from which tones are selected to form a melody. Thus, depending on the mode, the melody sets the emotional atmosphere of the music. Music in minor mode has been found to create melancholic and sad feelings, whereas major mode generates a cheerful and joyful atmosphere.[9]

As one of the tonal components, melody is a significant musical element composed of a series of tones with different pitches. Scholars propose that the melodic range can evoke different emotional responses: a high pitch range is associated with happiness, anger and fear, while a low pitch range is linked to sadness and tenderness.[10] In addition, pitch range variations were found to affect the listener’s perceived valence and arousal.[3]

Voice is one of the important timbral elements in vocal music. In instrumental music, timbre depends on the material the instrument is made of and on which instruments are used in a piece of music. That is, the timbre varies when the same music is played by one or by multiple instruments.[11] Similarly, the voice is a human instrument that may create music along with other instruments. Vocal music has a particular impact on musical expression and articulation, as the voice may sing with lyrics. A song sung by singers of different genders (e.g., male, female), by different numbers of singers (e.g., solo, chorus), with different vocal techniques (e.g., head voice, bel canto) or in different singing styles (e.g., fine art song, popular song) may lead to different emotional responses in listeners.

With regard to the measurement of listeners’ emotional responses, although various measuring tools have been developed, the dimensional model of emotion (i.e., valence and arousal) appears to be the most suitable for evaluating affective responses induced by music listening.[12,13,14] As mentioned above, the rhythmic components chiefly determine the energy level, which corresponds to arousal in the dimensional model, while the tonal components influence the affective quality, which corresponds to valence in the model.[1]

It is notable, however, that these findings are based on existing literature on instrumental music. In vocal music, the singer’s voice serves as the timbre of the music, much like a musical instrument. Since singing high or low may produce different vocal timbres, the vocal range is also an essential feature that may shape the listener’s emotional response. Therefore, this study examined whether affective responses differ towards vocal music sung in different vocal ranges. To control for mode, the musical excerpts were presented in both minor and major modes.

Accordingly, the study addressed the following two research questions.

1. Are there significant differences in the perceived valence depending on vocal register, mode, and the interaction effects between vocal register and mode?

2. Are there significant differences in the perceived arousal depending on vocal register, mode, and the interaction effects between vocal register and mode?

II. Method

2.1 Participants

A total of 188 female university students aged 19 to 34 years (mean age = 24.13, SD = 3.12) participated in an online survey experiment. Of the original 228 participants, 22 were excluded for not completing the survey questionnaire, and 1 non-binary and 17 male participants were removed from the data analysis because these groups made up too small a share of the sample. Regardless of education level or major, there were 147 non-musicians (78.19 %) and 41 musicians (21.81 %). All participants agreed to take part in the survey voluntarily. Prior to the commencement of the survey, they were informed of the research subject, purpose, procedure, confidentiality, and the experimenter’s contact information, and that they could end the survey at any time during their participation. They were given a digital coupon for coffee or a snack after completing the survey.

2.2 Music stimuli

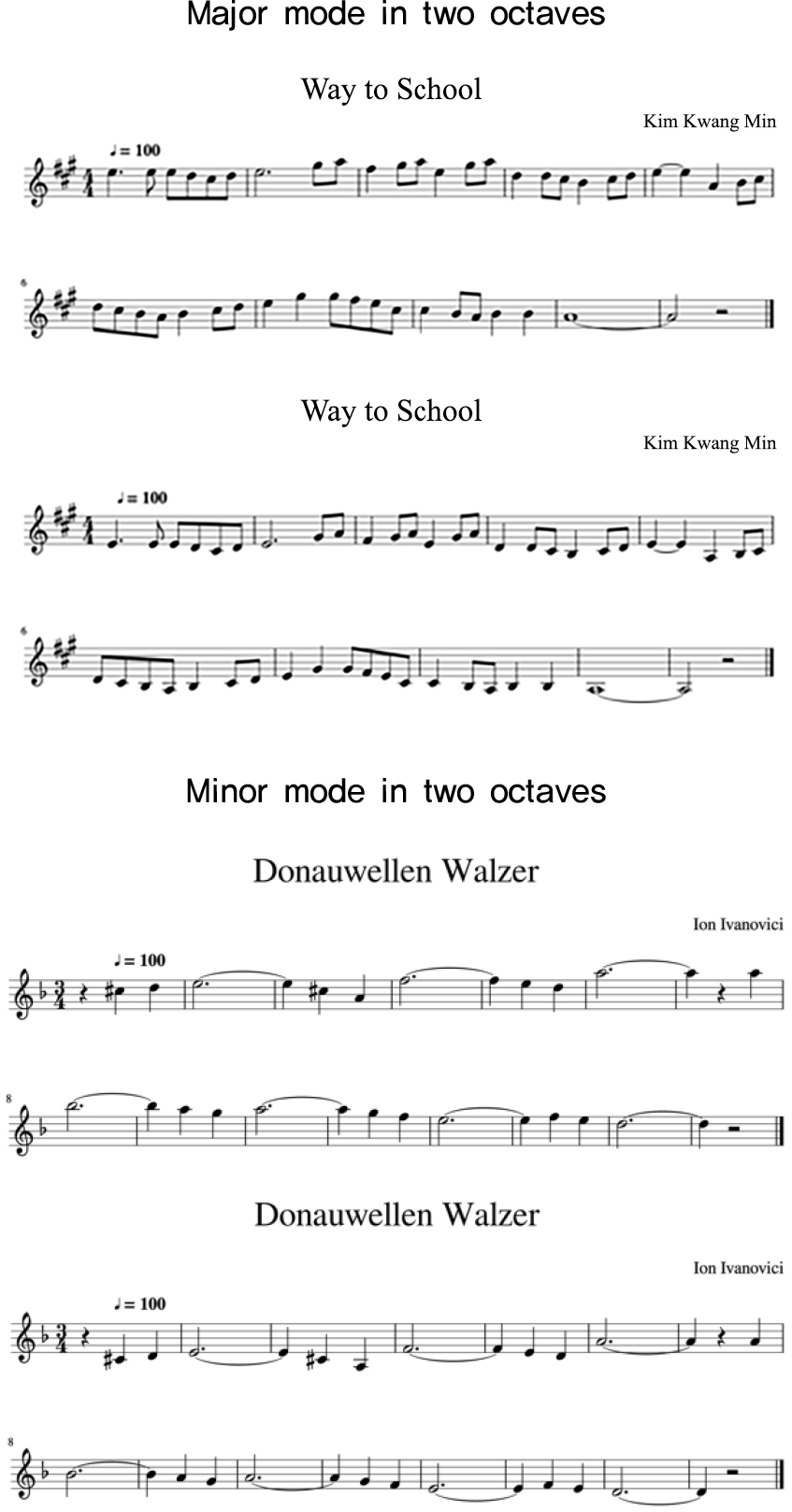

The study employed two abridged songs, one in major mode and one in minor mode, each sung in a higher and a lower vocal register by a male singer who was vocally trained as a baritone. The male voice was selected because of the singer’s ability to cover more than three tonal registers when singing. The two songs were selected based on previous research and the hypothesis that a high register may be associated with a high level of arousal and positive affect, whereas a low register may be linked to a low arousal level and negative affect.[10,15] Consequently, four excerpts were generated from the two songs, each sung in two different vocal registers (see Fig. 1).

The songs were sung on a nonsense syllable (i.e., the /a:/ vowel) in order to avoid any textual message that might affectively influence listeners. Each song lasted approximately 25 s, was sung without accompaniment, and was recorded in a professional sound studio. The tempo and duration of the four excerpts were kept at a similar level by the vocalist during the recording. All recordings were exported via Logic Pro X. Some of the notes in the vocal excerpts were slightly adjusted for better appreciation (see Table 1).

2.3 Measures

In this study, a self-report questionnaire and a psychometric instrument, the Visual Analogue Scale (VAS), were used to collect participants’ a) demographic information, b) current affective state and c) affective responses of valence and arousal to the four vocal excerpts.

In the survey questionnaire, participants’ consent and information regarding their age, gender, language ability, hearing condition, education level, major, daily music listening hours and vocal music training were collected (see Table 2).

In the second part, the VAS allowed participants to specify their affective response levels by indicating a position on a continuous slider bar (from 0 to 100 points).[16]

Table 1.

Parameters of the vocal excerpts.

Table 2.

Survey questionnaire structure.

2.4 Procedure

A pilot study was carried out to test the feasibility of the measurement tools and the overall procedure. Six female postgraduate students (mean age = 27.5, SD = 1.5) majoring in music therapy participated in an online survey. Question items were tested and modified for clarity.

The main study was conducted via an online survey tool. Participants were recruited through university teachers and by direct invitation, in which an advertisement with the research information was presented. Agreement to participate in the study was obtained prior to the questionnaire. Participants were instructed to take the survey in a quiet and comfortable environment and to use earphones when listening to the vocal excerpts.

Participants were guided online to complete the survey in three sections: a) survey on demographic information, b) ratings of current affective state, and c) ratings of perceived valence and arousal for the four vocal excerpts. The entire participation was estimated to take 9 minutes. In section c), a sample excerpt of instrumental music (i.e., piano, 16 seconds, pop style) was provided for a sound test and volume adjustment ahead of the vocal excerpts. The four vocal excerpts were presented to each participant in a randomised order.
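As an illustration of the randomised presentation, the following is a minimal sketch of how a per-participant order could be generated. It is not the procedure of the survey tool used in the study: the function name, the seeding scheme, and the label `LM` (lower register, major mode, by analogy with the labels HM, Hm and Lm used for the other excerpts) are assumptions made for this example.

```python
import random

# Excerpt labels: H/L = higher/lower register, M/m = major/minor mode.
# "LM" is an assumed label, formed by analogy with HM, Hm and Lm.
EXCERPTS = ["HM", "Hm", "LM", "Lm"]


def presentation_order(participant_id: int, base_seed: int = 0) -> list[str]:
    """Return a shuffled excerpt order that is reproducible per participant."""
    # Hypothetical seeding scheme: one independent generator per participant.
    rng = random.Random(base_seed * 1_000_003 + participant_id)
    order = EXCERPTS.copy()  # leave the master list untouched
    rng.shuffle(order)
    return order
```

Seeding by participant makes each order reproducible for later auditing while still varying the order across participants.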

2.5 Data analysis

A two-way ANOVA was performed separately for perceived valence and perceived arousal to test the main effects of vocal register and mode and the interaction effect between them. Participants’ demographic information was summarised using descriptive statistics. All analyses were conducted in SPSS (version 22).
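To make the decomposition behind a two-way ANOVA concrete, the sketch below computes the sums of squares and F ratios for a balanced design in plain Python. It is an illustrative reimplementation under stated assumptions, not the SPSS procedure used in the study: it treats every rating as an independent observation, the function name `two_way_anova` is hypothetical, and the ratings in the usage example are invented toy values rather than study data.

```python
from itertools import product
from statistics import mean


def two_way_anova(cells):
    """Balanced two-way ANOVA with interaction.

    cells maps (level_a, level_b) -> list of ratings; all cells must have
    the same size. Returns {source: (SS, df, MS, F)}; F is None for error.
    """
    levels_a = sorted({a for a, _ in cells})
    levels_b = sorted({b for _, b in cells})
    n = len(next(iter(cells.values())))
    assert all(len(v) == n for v in cells.values()), "design must be balanced"

    grand = mean(x for v in cells.values() for x in v)
    mean_a = {a: mean(x for b in levels_b for x in cells[(a, b)]) for a in levels_a}
    mean_b = {b: mean(x for a in levels_a for x in cells[(a, b)]) for b in levels_b}
    mean_ab = {k: mean(v) for k, v in cells.items()}

    # Main effects: squared deviations of marginal means from the grand mean.
    ss_a = n * len(levels_b) * sum((mean_a[a] - grand) ** 2 for a in levels_a)
    ss_b = n * len(levels_a) * sum((mean_b[b] - grand) ** 2 for b in levels_b)
    # Interaction: what the cell means add beyond the two main effects.
    ss_ab = n * sum(
        (mean_ab[(a, b)] - mean_a[a] - mean_b[b] + grand) ** 2
        for a, b in product(levels_a, levels_b)
    )
    # Error: spread of individual ratings around their own cell mean.
    ss_err = sum((x - mean_ab[k]) ** 2 for k, v in cells.items() for x in v)

    df_a, df_b = len(levels_a) - 1, len(levels_b) - 1
    df_err = len(levels_a) * len(levels_b) * (n - 1)
    ms_err = ss_err / df_err

    table = {}
    for name, ss, df in (("A", ss_a, df_a), ("B", ss_b, df_b), ("A*B", ss_ab, df_a * df_b)):
        ms = ss / df
        table[name] = (ss, df, ms, ms / ms_err)
    table["error"] = (ss_err, df_err, ms_err, None)
    return table


# Toy ratings (invented for illustration), keyed by (register, mode).
toy = {
    ("high", "major"): [60, 50, 55],
    ("high", "minor"): [30, 35, 25],
    ("low", "major"): [50, 45, 40],
    ("low", "minor"): [35, 30, 40],
}
result = two_way_anova(toy)
```

Here factor A corresponds to vocal register and factor B to mode. Because each participant rated all four excerpts, a repeated-measures model would be an alternative design choice; the independent-observations layout is used only to keep the sketch short.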

III. Results and discussion

3.1 Differences of valence response depending on vocal register, mode and the interaction effect

The results showed significant differences in perceived valence between the vocal registers (F(1, 752) = 8.09, p < .01) and between the modes (F(1, 752) = 108.36, p < .001), as well as a significant interaction effect of vocal register and mode (F(1, 752) = 18.66, p < .001) (see Table 3).

Table 3.

Two-way analysis of variance (ANOVA) on perceived valence. (N = 188)

| Source | MS | F | p | ηp² | df |
| --- | --- | --- | --- | --- | --- |
| Vocal Register (VR) | 3425.57 | 8.09 | .005** | .011 | 187 |
| Mode (M) | 45867.19 | 108.36 | .000*** | .127 | 187 |
| VR * M | 7897.57 | 18.66 | .000*** | .024 | 187 |
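The partial eta squared column can be related to the F ratios through the standard identity ηp² = (F × df_effect) / (F × df_effect + df_error). The sketch below applies that identity to the F values in Table 3. The error degrees of freedom of 748 (four excerpts with 188 − 1 ratings each) is an assumption made for this example and is not stated in the text; the function name is likewise hypothetical.

```python
def partial_eta_squared(f_value: float, df_effect: int, df_error: int) -> float:
    """Partial eta squared recovered from an F ratio and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# Assumed error df: 4 excerpts x (188 - 1) ratings = 748 (not stated in the text).
DF_ERROR = 748

for source, f_value in (("VR", 8.09), ("M", 108.36), ("VR * M", 18.66)):
    print(source, round(partial_eta_squared(f_value, 1, DF_ERROR), 3))
```

Under this assumption the loop prints 0.011, 0.127 and 0.024, which agree with the tabulated effect sizes.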

The result indicated that vocal register by itself had a significant effect on listeners’ valence response, which is partially in line with an earlier study suggesting that higher pitch in the singing voice was mostly perceived as pleasant.[17] This finding implies that a melody in a higher register may be an acoustic manifestation of the metaphor for uplifted mood. Mood is often expressed using spatial metaphors such as feeling high or low, which can be a form of schematic expression.

Secondly, mode had a statistically significant effect on perceived valence, which was congruent with past research associating mode with valence responses.[6,18,19,20] Also, compared to vocal register, musical mode appeared to weigh more heavily on listeners’ valence response, which was consistent with preceding findings.[6,21]

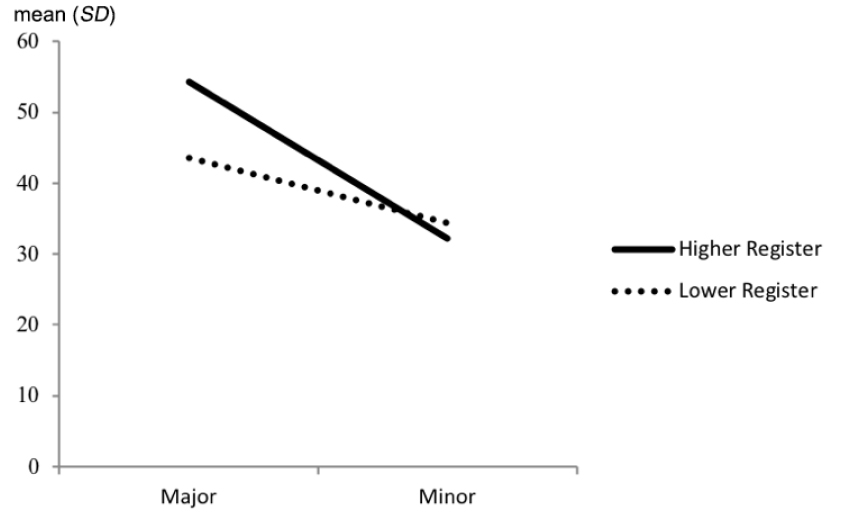

According to the descriptive statistics of listeners’ affective responses to the four vocal excerpts, the Estimated Marginal Means (EMM) of valence in Fig. 2 revealed that a) in major mode, the difference in valence ratings between the higher and lower vocal registers was larger than the difference in minor mode, b) in the higher vocal register, the difference in valence ratings between major and minor modes (i.e., the solid-line slope) was larger than the difference in the lower vocal register (i.e., the dotted-line slope), c) regardless of the vocal register, valence responses in major mode were higher than those in minor mode, which was mostly congruent with earlier studies suggesting that major mode elicits a more pronounced valence response than minor mode,[19,21] and d) in the higher vocal register, the valence response was more positive in major mode and more negative in minor mode, whereas in the lower vocal register, the valence response was less positive in major mode and less negative in minor mode.

3.2 Differences of arousal response depending on vocal register, mode and the interaction effect

The results showed significant differences in perceived arousal between the vocal registers (F(1, 752) = 28.36, p < .001) and between the modes (F(1, 752) = 59.13, p < .001). However, the interaction effect of vocal register and mode on arousal response was not statistically significant (F(1, 752) = .01, p > .05) (see Table 4).

Table 4.

Two-Way ANOVA on perceived arousal. (N = 188)

| Source | MS | F | p | ηp² | df |
| --- | --- | --- | --- | --- | --- |
| Vocal Register (VR) | 8831.02 | 28.36 | .000*** | .037 | 187 |
| Mode (M) | 18412.02 | 59.13 | .000*** | .073 | 187 |
| VR * M | 1.82 | .01 | .939 | .000 | 187 |

The result implied that vocal register, a pitch-related feature, had a statistically significant effect on arousal response, which was consistent with a previous study showing that pitch range variation influences perceived arousal to instrumental music.[3] The result also indicated that mode had a significant impact on arousal. However, this contrasts with a past study’s finding that manipulating musical mode had no influence on arousal in instrumental music.[1]

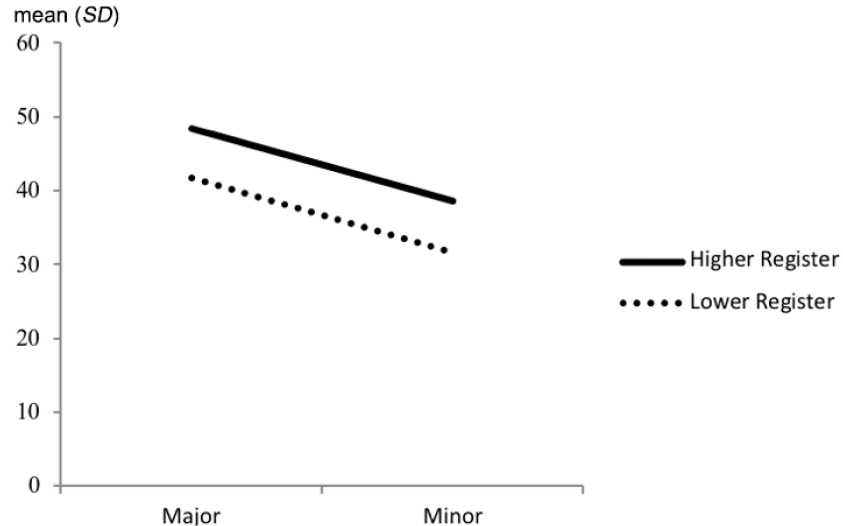

The EMM of arousal in Fig. 3 presented two seemingly parallel lines, implying that vocal register influenced arousal in the same pattern in both modes, in keeping with the non-significant interaction. That is, in major mode, arousal was perceived as higher in the higher vocal register and lower in the lower vocal register. Similarly, in minor mode, the arousal response was also higher and lower in the corresponding vocal registers. Further, the combination of higher vocal register and major mode yielded the highest arousal response, while the combination of lower vocal register and minor mode yielded the lowest.

3.3 Difference of affective responses among the four vocal excerpts

Based on the descriptive statistics (see Table 5), the excerpt with higher register in major mode (HM) had the highest mean score (mean = 54.23, SD = 17.86) and the excerpt with higher register in minor mode (Hm) had the lowest mean score (mean = 32.13, SD = 20.34) for valence response. The excerpt HM also had the highest mean score (mean = 48.40, SD = 18.50), whereas the excerpt with lower register in minor mode (Lm) had the lowest mean score (mean = 31.65, SD = 18.29), for arousal response. The results reflected that the combination of higher vocal register and major mode elicited the highest ratings in listeners’ affective responses (valence and arousal).

IV. Conclusion

This study examined the differences in affective response between high and low vocal registers and between musical modes. The results revealed significant differences in perceived valence and arousal depending on both vocal register and mode. While a significant interaction effect was found for perceived valence, none was found for perceived arousal. In addition, although the effect of mode on valence is widely recognised in the domain of music and emotion,[15] the result implies that a difference in the perception of valence may be accompanied by a difference in the perception of arousal level as well. In conclusion, the study suggests that vocal register, as much as mode, is an important factor to consider in music selection, as it was found to induce different responses within the same piece of music.

The limitation of the study involves gender factors related to the vocalist’s voice in the musical excerpts. The musical excerpts were recorded using a male voice in order to cover a two-octave vocal range, while all participants included in the analysis were female. Thus, firstly, studies on this topic with a balanced number of female and male participants are needed. It would also be worthwhile to investigate whether the findings of this study hold when a female vocalist sings the vocal excerpts.